This article covers setting up HTTPS/SSL for an Amazon S3 Static Single Page Application using AWS CloudFront and AWS Certificate Manager on a domain hosted by GoDaddy.com.

Key Terms

AWS - Amazon Web Services

AWS S3 - Amazon Simple Storage Service is a service offered by Amazon Web Services that provides object storage through a web service interface. This is where our static SPA is hosted.

AWS Certificate Manager (ACM) - a service that lets you easily provision, manage, and deploy public and private Secure Sockets Layer/Transport Layer Security (SSL/TLS) certificates for use with AWS services and your internal connected resources. SSL/TLS certificates are used to secure network communications.

AWS CloudFront - a content delivery network offered by Amazon Web Services. It sits in front of our S3 bucket, and it's where we attach the SSL certificate issued by AWS Certificate Manager.

SPA - Single Page Application. In this case it will be a static Webpack-built ReactJS App.

This week I started moving my project from HTTP to HTTPS to make it more legit as I near a public "release". Why is moving to HTTPS important?

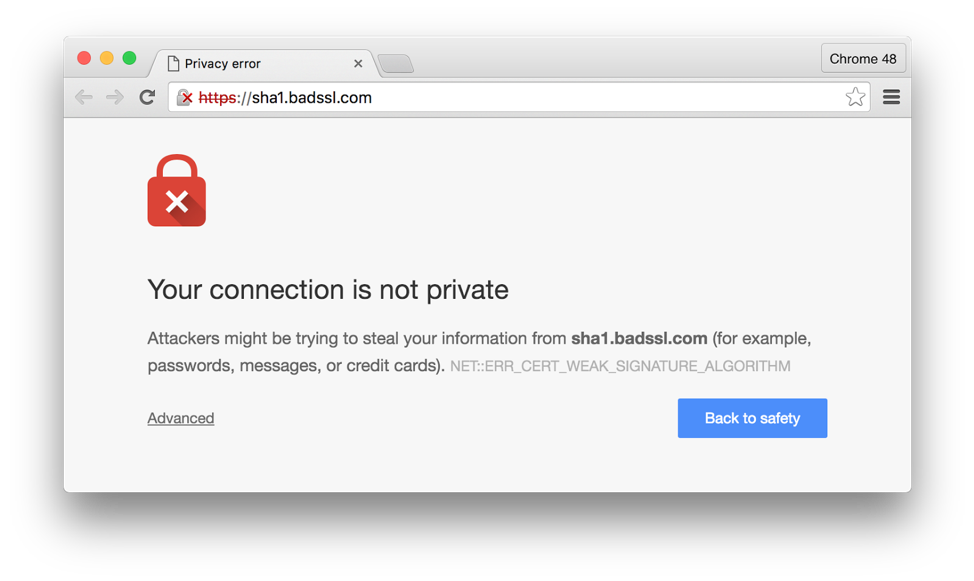

Reason 1: Google

The big driver for this need is Google, which is phasing support for HTTP connections out of Google Chrome. Today, Chrome warns users when they’re accessing a site over HTTP, but doesn’t prevent it altogether. In some cases, users get a nasty warning which will likely scare them away.

Reason 2: It’s the Right Thing to Do

HTTP connections reveal, at a minimum, full information about the routing and content of requests to everyone else on the local network. If your users are accessing your site in a coffee shop, no amount of server-side security will prevent you from leaking information in the stage between the user (and their router) and your site. My site won't handle any sensitive data, but it's a good practice to follow regardless.

So let's get started!

Step 1: Get a Cert

Initially, all signs pointed toward https://letsencrypt.org/getting-started/ and the tool https://certbot.eff.org/. These tools looked great, but as far as I could tell they aren't a good fit if you're deploying a static site on Amazon S3, because you don't have SSH access to the server. Besides, AWS has its own tooling for setting up SSL certs.

Enter AWS Certificate Manager

Amazon Web Services (AWS) has great resources for issuing and using SSL certificates, but the process of migrating existing resources to HTTPS can be complex — and it can also require many intermediate steps. It ended up being more work than I had expected, but mostly because GoDaddy DNS and UIs are awful.

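To get started, log into the AWS Certificate Manager console and request a certificate. Note that a certificate used with CloudFront must be requested in the US East (N. Virginia) region, us-east-1. ACM offers 2 options for confirming that you own your domain: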

* CNAME Records

* Email

The documentation suggests the CNAME path is the better of the two long term, so that's what I did. ACM gives you some CNAME records that you must add in the DNS management for your domain. In my case this is GoDaddy (but not for long, hopefully).
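The same request can also be made from a script rather than the console. The following is a minimal sketch, assuming the third-party `boto3` SDK is installed and AWS credentials are configured; the function names are my own, not part of any AWS tooling. The second helper only reads a `describe_certificate` response and pulls out the CNAME records you would then enter at your DNS provider.

```python
from typing import Any


def request_dns_validated_cert(domain: str) -> str:
    """Request a public certificate with DNS (CNAME) validation; returns its ARN."""
    import boto3  # third-party AWS SDK, imported lazily so the helper below works without it

    # CloudFront only accepts ACM certificates issued in us-east-1.
    acm = boto3.client("acm", region_name="us-east-1")
    response = acm.request_certificate(DomainName=domain, ValidationMethod="DNS")
    return response["CertificateArn"]


def validation_records(describe_response: dict[str, Any]) -> list[tuple[str, str, str]]:
    """Extract (name, type, value) records from a describe_certificate response."""
    options = describe_response["Certificate"].get("DomainValidationOptions", [])
    records = []
    for option in options:
        record = option.get("ResourceRecord")  # can be missing right after the request
        if record:
            records.append((record["Name"], record["Type"], record["Value"]))
    return records
```

The records may not be present immediately after the request, so in practice you might call `describe_certificate` again after a few seconds before reading them.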

I entered the CNAME records, set the TTL to 30 min, and then waited... and waited... and waited.

After 24 hours I started to get impatient. I started googling around and found this page which details an issue with the GoDaddy DNS system. The problem with GoDaddy is that they auto-append the domain name at the end of the "host" field when creating a CNAME record. Therefore, you are required to omit the apex domain from the "name" string provided to you by ACM when adding it to "host" on GoDaddy.

So if AWS gives you a record like:

RECORD NAME: _ho9hv39800vb302vejvnw3vnewoib3u.example.com.

RECORD VALUE: _cjhwou20vhu20uvoneouw20vuyb2ovb9.j9s73ucn9vy.acm-validations.aws.

Enter the following for GoDaddy:

NAME: _ho9hv39800vb302vejvnw3vnewoib3u

VALUE: _cjhwou20vhu20uvoneouw20vuyb2ovb9.j9s73ucn9vy.acm-validations.aws.

After doing so, I popped back over to AWS Cert Manager and hit refresh and after a couple of minutes, it went from pending to verified!
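The trimming rule for GoDaddy's "host" field is easy to get wrong by hand, so here is a small sketch of it. The function name `godaddy_host` is hypothetical, and it assumes GoDaddy appends the apex domain exactly as described above.

```python
def godaddy_host(record_name: str, apex_domain: str) -> str:
    """Strip the apex domain (and trailing dot) from an ACM record name."""
    name = record_name.rstrip(".")
    suffix = "." + apex_domain.rstrip(".")
    # DNS names are case-insensitive, so compare in lowercase.
    if not name.lower().endswith(suffix.lower()):
        raise ValueError(f"{record_name!r} is not under {apex_domain!r}")
    return name[: -len(suffix)]


print(godaddy_host("_ho9hv39800vb302vejvnw3vnewoib3u.example.com.", "example.com"))
# _ho9hv39800vb302vejvnw3vnewoib3u
```

The record value is left untouched; only the name needs trimming.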

Step 2: Link with CloudFront

To connect your new cert with your S3 bucket, you need to add a product that sits between your DNS and your S3 bucket. This product is AWS CloudFront.

Go through the AWS Console and open the CloudFront product. Create a CloudFront distribution, select your AWS S3 bucket as the origin, and choose the cert we just created above.

After doing this, I tried to access the site via the CloudFront distribution Domain Name and it told me Access Denied.

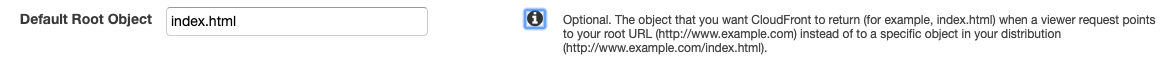

It turned out I needed to set the distribution's Default Root Object to index.html, which is the root file inside my S3 bucket.

When I refreshed my page, it loaded the HTML document stored in the S3 bucket, but the page was blank. I checked the JS console and saw it immediately threw errors:

client.js:653 Mixed Content: The page at 'https://d1krmsds175gpf.cloudfront.net/' was loaded over HTTPS, but attempted to connect to the insecure WebSocket endpoint 'ws://54.xxx.xx.49/'. This request has been blocked; this endpoint must be available over WSS.

I believe this is because my site is now being served over HTTPS, but the URI of the EC2 instance/API I'm trying to connect to is hardcoded to use HTTP and WS. I updated my front end to use WSS, but the browser still tells me that my endpoint doesn't have WSS enabled.
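Rather than hardcoding the scheme, the rule the browser enforces can be stated as a tiny function: an HTTPS page must use `wss://`, while an HTTP page may use `ws://`. This is only a sketch of that rule in Python; the helper name and the `api.example.com` host are placeholders, and the same logic would need to live in the front-end code.

```python
from urllib.parse import urlsplit, urlunsplit


def websocket_url_for(page_url: str, api_host: str, path: str = "/") -> str:
    """Pick ws:// or wss:// to match the scheme the page was loaded with."""
    page_scheme = urlsplit(page_url).scheme
    ws_scheme = "wss" if page_scheme == "https" else "ws"
    return urlunsplit((ws_scheme, api_host, path, "", ""))


print(websocket_url_for("https://d1krmsds175gpf.cloudfront.net/", "api.example.com"))
# wss://api.example.com/
```

Choosing the scheme this way only avoids the mixed-content block; the endpoint itself still has to accept TLS connections for `wss://` to work.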

Watch Out! I learned that CloudFront has a built-in cache, so changes I was making to the S3 bucket weren't showing up when I refreshed the CloudFront URL. I ended up finding this post, which describes adjusting the Min/Max TTL on the distribution. I'll need to make sure I set this back later to take advantage of caching.
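A different way to see fresh files, and not what I did here, is to leave the TTLs alone and invalidate the cached paths after each upload. The sketch below assumes the third-party `boto3` SDK and configured credentials; the function names and the idea of using a timestamp as the caller reference are my own choices.

```python
import time
from typing import Any


def invalidation_batch(paths: list[str], caller_reference: str) -> dict[str, Any]:
    """Build the InvalidationBatch structure that CloudFront expects."""
    return {
        "Paths": {"Quantity": len(paths), "Items": paths},
        # Must be unique per request so CloudFront can detect accidental retries.
        "CallerReference": caller_reference,
    }


def invalidate(distribution_id: str, paths: list[str]) -> str:
    """Ask CloudFront to drop cached copies of the given paths; returns the invalidation ID."""
    import boto3  # third-party AWS SDK, imported lazily

    cloudfront = boto3.client("cloudfront")
    response = cloudfront.create_invalidation(
        DistributionId=distribution_id,
        InvalidationBatch=invalidation_batch(paths, str(time.time())),
    )
    return response["Invalidation"]["Id"]
```

Calling `invalidate(distribution_id, ["/*"])` with your own distribution ID would clear everything, which is simple but can incur charges if done very often.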

To at least verify that the site would work over HTTP, I switched the CloudFront distribution's Viewer Protocol Policy to "HTTP and HTTPS", loaded the page over HTTP, and verified it worked.

Now I need to figure out how to get my AWS EC2 instance to use an SSL cert. A cert typically needs to be issued for a domain name, not an IP address, which is how I'm accessing my API. So I think this needs to change.